Experiments with Stable Diffusion

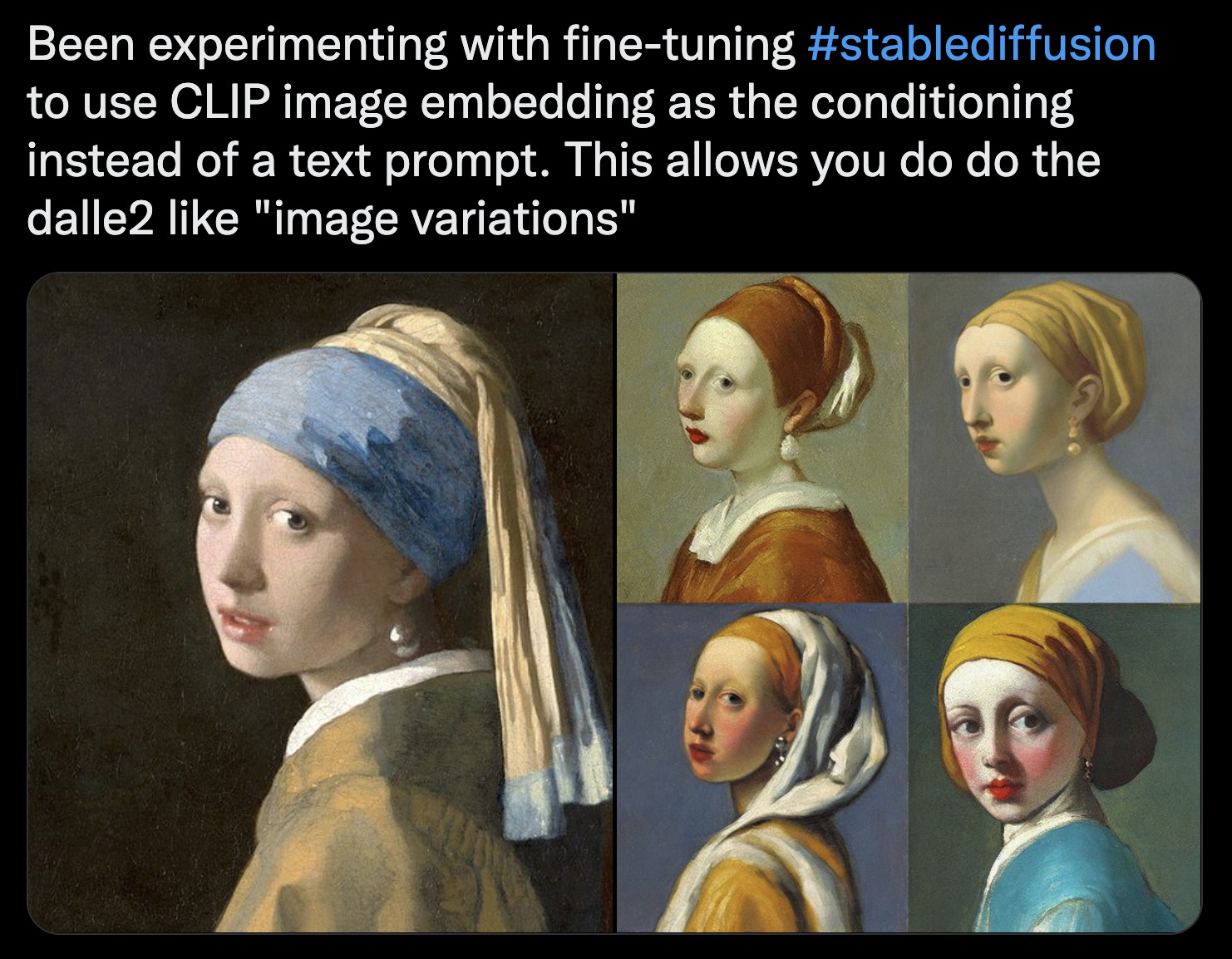

Image variations

TODO: describe in more detail.

- Get access to a Linux machine with a decent NVIDIA GPU (e.g. on Lambda GPU Cloud)
- Clone this repo
- Make sure PyTorch is installed, then install the other requirements: `pip install -r requirements.txt`
- Get the model from the Hugging Face Hub: `lambdalabs/stable-diffusion-image-conditioned`
- Put the model in `models/ldm/stable-diffusion-v1/sd-clip-vit-l14-img-embed_ema_only.ckpt`
- Run `scripts/image_variations.py` or `scripts/gradio_variations.py`
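The steps above depend on a working GPU setup and on the checkpoint sitting at one specific path. The Python helper below is a minimal, hypothetical sketch (it is not part of this repo) that checks those prerequisites before you launch either script. It builds the same Hugging Face `resolve/main` download URL that the `wget` command in this README uses, can fetch the checkpoint with the standard library, and lists anything that still looks missing. The helper names (`checkpoint_url`, `download_checkpoint`, `missing_prerequisites`) are made up for illustration. Finding `nvidia-smi` on `PATH` is only a rough stand-in for a usable GPU.

```python
"""Hypothetical pre-flight check for the image-variations setup steps.

Assumes it is run from the root of the cloned repo. The repo id, file name
and target directory are copied from the setup steps in this README.
"""
import importlib.util
import shutil
import urllib.request
from pathlib import Path

REPO_ID = "lambdalabs/stable-diffusion-image-conditioned"
CKPT_NAME = "sd-clip-vit-l14-img-embed_ema_only.ckpt"
CKPT_DIR = Path("models/ldm/stable-diffusion-v1")


def checkpoint_url(repo_id: str = REPO_ID, filename: str = CKPT_NAME) -> str:
    # Same "resolve/main" download URL as the wget command in the README.
    return f"https://huggingface.co/{repo_id}/resolve/main/{filename}"


def download_checkpoint(root: Path = Path(".")) -> Path:
    """Download the checkpoint to the expected path unless it already exists."""
    target = root / CKPT_DIR / CKPT_NAME
    target.parent.mkdir(parents=True, exist_ok=True)
    if not target.exists():
        urllib.request.urlretrieve(checkpoint_url(), target)
    return target


def missing_prerequisites(root: Path = Path(".")) -> list[str]:
    """Return human-readable problems; an empty list means ready to run."""
    problems = []
    # find_spec only checks that torch is importable; it does not import it.
    if importlib.util.find_spec("torch") is None:
        problems.append("PyTorch is not installed")
    # nvidia-smi on PATH is only a rough proxy for a working NVIDIA driver.
    if shutil.which("nvidia-smi") is None:
        problems.append("nvidia-smi not found (no NVIDIA driver or GPU?)")
    if not (root / "requirements.txt").exists():
        problems.append("requirements.txt not found; run from the repo root")
    if not (root / CKPT_DIR / CKPT_NAME).is_file():
        problems.append(f"checkpoint missing: {CKPT_DIR / CKPT_NAME}")
    return problems


if __name__ == "__main__":
    issues = missing_prerequisites()
    if not issues:
        print("All prerequisites found.")
    for issue in issues:
        print("-", issue)
```

Saved under a name of your choosing (say `check_setup.py`, a hypothetical file) in the repo root, `python check_setup.py` prints any remaining problems. Calling `download_checkpoint()` is one way to replace the manual download and copy steps.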

All together:

git clone https://github.com/justinpinkney/stable-diffusion.git

cd stable-diffusion

mkdir -p models/ldm/stable-diffusion-v1

wget https://huggingface.co/lambdalabs/stable-diffusion-image-conditioned/resolve/main/sd-clip-vit-l14-img-embed_ema_only.ckpt -O models/ldm/stable-diffusion-v1/sd-clip-vit-l14-img-embed_ema_only.ckpt

pip install -r requirements.txt

python scripts/gradio_variations.py

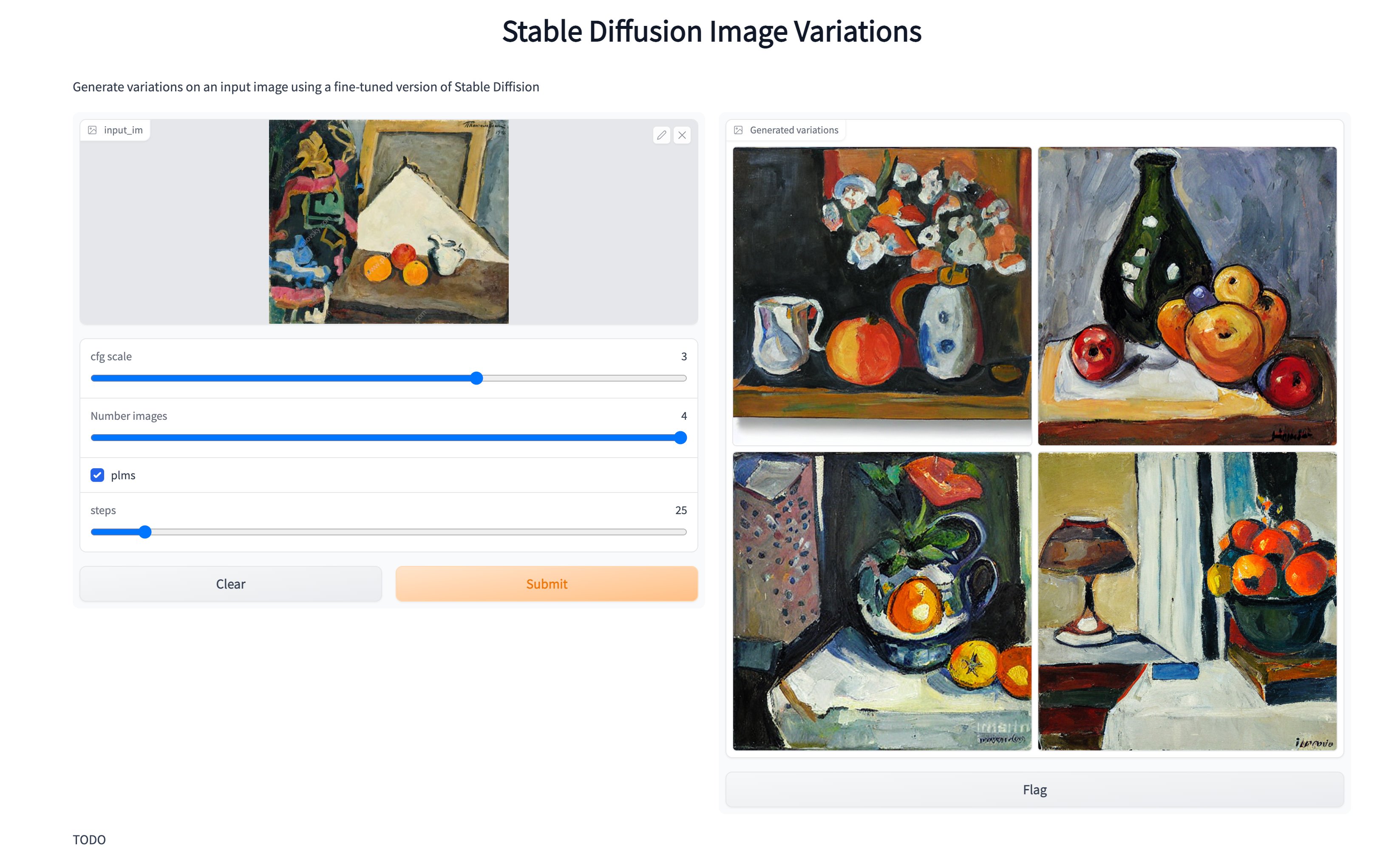

Then you should see this:

Trained by Justin Pinkney (@Buntworthy) at Lambda